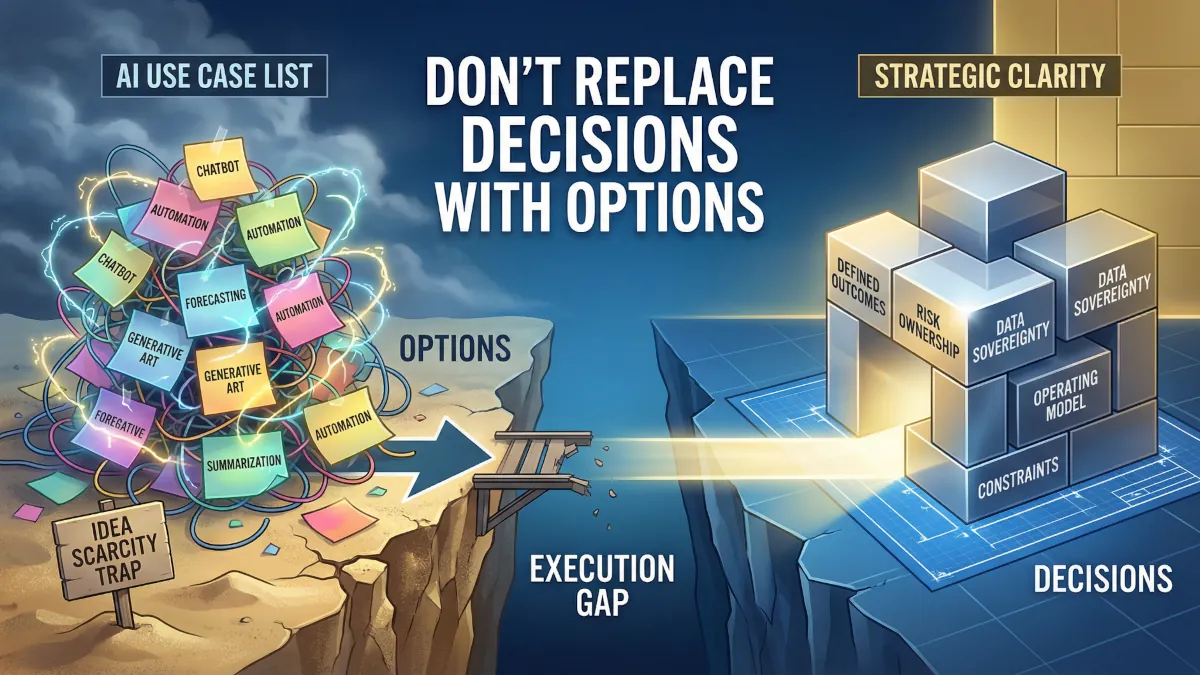

Listing AI use cases is an avoidance mechanism

Most organisations get off course as soon as they create a list of AI use cases.

The list is probably fine. And lists travel well. They create something a board can read, something an executive can sponsor, and something a team can populate quickly. In a pressured environment, “show me the use cases” feels like the most responsible question in the room.

But it’s a trap.

Pressure creates the wrong starting line

Boards and executives are being asked to spend money, take risk, and defend decisions in public. AI is the backdrop, but the pressure is older than AI. It is the pressure to surface something. Something visible. Something that signals motion.

In that environment, “show me the use cases” becomes the safest demand in the room. It sounds concrete. It looks managerial. It creates artefacts that can be counted and presented.

A list also gives people a way to avoid the most uncomfortable part of the conversation. It creates the appearance of forward movement without forcing agreement on what matters, what must be protected, and what is allowed to break. It produces progress theatre without anyone intending it.

I’m not saying that use cases are useless. I’m saying that use cases are the wrong place to start.

Idea scarcity is not the constraint

When leaders push for use cases early, the stated fear is that the organisation lacks ideas. That AI is new, and the enterprise needs to be creative. That the job is to “unlock” imagination and crowdsource opportunity.

In most organisations, the opposite is true. Ideas are abundant. People can produce them on command. They will produce them faster when they sense executive attention and budget.

What is scarce is not imagination. It is decision quality under competing priorities. It is clarity about outcomes, constraints, ownership, and trade-offs.

The use case request becomes a proxy for leadership work that has not yet been done. It shifts the burden from executives to teams. It turns a question of enterprise intent into a question of individual suggestion.

That is why the same organisations can generate fifty use cases in a fortnight and still struggle to deliver a single scalable outcome six months later.

Use cases follow clarity and do not create it

Use cases are outputs of clarity, not inputs.

Clarity means the organisation knows what it is trying to achieve in business terms, what it is willing to give up to achieve it, and what risks it will not take. Clarity means someone can say, without defensiveness, “This is what we are optimising for,” and the room can back that decision.

When clarity is present, use cases emerge naturally. They are not brainstormed. They are derived. The question is not “What could we do with AI?” The question becomes “Given what we have decided matters, where does the current system fail to deliver that outcome, and what intervention changes the decision quality or cycle time?”

Starting with use cases skips the hard decisions about priorities, constraints, and outcomes. It invites teams to build proposals in a vacuum. It rewards novelty over fit. It treats AI as the story, when the story is the organisation’s ability to decide and execute.

Without clarity, a use case list is not a plan. It is a catalogue of unresolved arguments.

Use case lists grow faster than value

There is a predictable pattern once the use case machine starts.

Every executive sponsor wants their area represented. Every function has a backlog of frustrations that AI seems like it could relieve. Every vendor presentation adds fresh vocabulary. Every internal demo becomes a new entry on the list.

The list expands because it is easier to add than to subtract. Addition is politically safe. Subtraction forces an explicit choice, and explicit choices create losers. In a pressured environment, most organisations avoid creating losers, even when the avoidance creates broader loss later.

Value does not scale at the same rate as ideas because value requires coherence. It requires a small number of outcomes that can absorb cross-functional effort and justify the hard work of data alignment, risk treatment, process redesign, and change.

A long list also changes behaviour. Teams learn that their job is to produce options, not results. Leaders learn to sponsor themes, not decisions. The organisation becomes busy, not effective.

The most damaging outcome is not wasted effort. It is diluted focus. When everything is a potential use case, nothing is a priority.

Idea-first thinking fragments the organisation

Use case thinking feels inclusive. It invites participation and makes people feel heard. The cost is that it creates fragmentation before the organisation has agreed on the ground rules.

Each use case is interpreted through a local lens. Sales frames it as growth. Finance frames it as control. Risk frames it as containment. Operations frames it as efficiency. IT frames it as architecture. HR frames it as capability. Everyone is rational. Everyone is also pulling in different directions.

The fragmentation shows up in small choices that later become structural problems. Different data definitions. Different measures of success. Different interpretations of “approved.” Different procurement paths. Different security postures. Different vendors. Different operating rhythms.

The organisation ends up with multiple AI initiatives, each with its own truth, each too small to justify enterprise enablement, each large enough to create integration pain.

This is why early use case catalogues often become the seed of future complexity. They are not neutral artefacts. They are an allocation mechanism, and they allocate ambiguity.

Quick wins stall when they meet ownership and risk

A business unit runs a two-week sprint to deliver an AI “quick win.” It is well intentioned and often well executed. A small team builds a prototype that looks impressive in a demo. It drafts emails faster, summarises meetings, suggests responses, produces a dashboard narrative. People clap. The board pack gets a screenshot.

Then it hits the enterprise.

The moment the tool touches live data, questions appear that were not answered at the start. Who owns the data being used? Who is accountable for errors? What information is allowed to leave the environment? What logging is required? What model behaviour is acceptable in regulated interactions? What happens when the output is wrong, biased, or confidently incomplete?

The quick win team is not equipped to resolve those questions because they are not engineering questions. They are governance questions and operating model questions. They belong to leaders, not prototypes.

So the work stalls. The prototype lives in a sandbox. The team either moves on to the next quick win or becomes trapped in a slow negotiation with security, legal, data, and compliance. The business begins to think IT is blocking progress. IT begins to think the business is reckless. Risk becomes the villain. Trust erodes.

The quick win did not fail because AI is hard. It failed because the organisation tried to scale a solution before it decided how to own the problem.

Leaders miss the decision that the use case list avoids

The blind spot is believing the list is the decision.

A use case list feels like governance because it can be reviewed, ranked, and funded. It creates the illusion of control. In reality, it delays the decisions that make delivery possible.

The decisions that matter are not about which use case is most exciting. They are about what the enterprise will optimise for, what constraints are non-negotiable, and what trade-offs are acceptable.

These are decisions about consequences. Consequences for customer experience, for risk appetite, for workforce design, for vendor dependency, for data sovereignty, for auditability, for resilience.

When leaders avoid those decisions, they still pay for them. The cost just arrives later, disguised as delivery friction.

The other part of the blind spot is mistaking visible activity for organisational learning. A hundred pilots can teach less than one properly governed deployment, because pilots allow the enterprise to keep its current assumptions intact. Deployment forces the enterprise to confront reality.

Months later, the second-order cost becomes structural

Six months after the use case push, many organisations find themselves in a similar position.

They have several pilots, a set of scattered tools, and a growing sense of fatigue. They have spent money, but the business cannot point to sustained performance change. People become cynical. The board becomes more demanding. Executives become more defensive. The organisation becomes less willing to take the next risk, even when it should.

Meanwhile, the structural costs accumulate quietly.

Security and risk teams are now dealing with a patchwork of controls. Data teams are managing ad hoc integrations. Architecture is negotiating exceptions. Procurement is managing multiple small contracts. Leaders are mediating conflict between functions that believe they are protecting the enterprise.

The organisation’s ability to make clean decisions gets worse, not better. Every future AI decision becomes harder because the environment is noisier, not clearer. The next platform choice is forced under pressure. The next governance change is framed as a correction rather than a deliberate design.

This is the irony. The use case list, built to show progress, can end up reducing the organisation’s capacity to progress.

Clarity changes decisions and shrinks the list to what matters

When clarity returns, the dynamic shifts.

The organisation stops treating AI as a search for ideas and starts treating it as an environment that exposes weak decisions. The focus moves from “What can we build?” to “What outcomes must improve, and what must remain true while we improve them?”

With that clarity, the use case list becomes smaller and sharper. It stops being a catalogue and becomes a set of implications. Some items disappear because they were never aligned to outcomes. Others collapse into a single coherent initiative because they were fragments of the same underlying problem.

Investment conversations also change. Funding is no longer justified by the number of use cases or the quality of demos. It is justified by the outcome, the constraints, and the ability to execute without creating downstream mess.

Teams are still creative, but their creativity is bounded by enterprise intent. Innovation becomes useful rather than noisy. Risk is treated as design input rather than late-stage veto. Quick wins either connect to a scalable path or they are not pursued.

Use cases still exist. They just arrive at the right time, as the product of decisions that leaders have already made.

Solving the Right Problem First

If your AI agenda feels busy but not decisive, you are likely solving the wrong problem first.

This is how we have helped our customers:

- Clarity on what's worth doing: Define the problem and the trade-offs before anyone builds.

- Deliver targeted initiatives: Integrate solutions that tie directly to outcomes, not experiments.

- Sustain momentum with capability: Leadership, governance, and operating discipline that make gains stick.

The best place to start is a simple AI Opportunity Audit.